yes this should work! the reason for this is that meshes can be composed of n-gons – not just tris – so we need more information to know how many indices to grab from the array to form the next face

Thanks.

I actually followed the hint from ConvertMeshUtils.cs Line 299 and used 0 as a triangle flag.

Now that has some sweet mesh

This geometry is now cool but jittery, which I guess is a distance-from-zero issue. Is there a recommended OOTB transform-to-zero routine? I know I’ve seen the discussion about selecting which origin to use for Revit. I can go dig there.

fab! and yes, the jitter is prob the distance from zero issue which will be resolved in the viewer at some point soon.

re the 0 tri flag, we used to only support tris (0) and quads (1) in meshes, but have upgraded to allow any n-gons. this upgrade led us to go with 3 and 4 as the more logical tris and quads flags, though everything remains backwards compatible. if you’re building something new though, I’d probably suggest using 3 as the flag, as the 0 and 1 flags will prob be deprecated in the future.
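For anyone building a converter, here is a minimal Python sketch of how a flat face list with these flags could be walked. The function names and the exact list layout (`flag, index, index, ...` repeated) are assumptions for illustration, not the Speckle API; the flag meanings (0 or 3 for tris, 1 or 4 for quads, n for n-gons) come from the explanation above.

```python
def face_vertex_count(flag: int) -> int:
    """Map a face flag to the number of vertex indices that follow it."""
    if flag == 0:  # legacy triangle flag
        return 3
    if flag == 1:  # legacy quad flag
        return 4
    if flag >= 3:  # current scheme: the flag is the vertex count itself
        return flag
    raise ValueError(f"unsupported face flag: {flag}")


def decode_faces(faces: list[int]) -> list[list[int]]:
    """Split a flat [flag, i0, i1, ..., flag, ...] list into per-face index lists."""
    result = []
    i = 0
    while i < len(faces):
        n = face_vertex_count(faces[i])
        indices = faces[i + 1 : i + 1 + n]
        if len(indices) != n:
            raise ValueError("face list ends in the middle of a face")
        result.append(indices)
        i += n + 1  # skip the flag plus its n indices
    return result


# A legacy tri (flag 0) followed by a tri written with the newer flag 3.
print(decode_faces([0, 0, 1, 2, 3, 0, 2, 3]))
```

Both flags for the same face type decode identically here, which is what keeps older commits readable.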

Yas, spatial jitter is solved now by @alex! It wasn’t exactly easy:

We’ll be pushing a new viewer release soon™️.

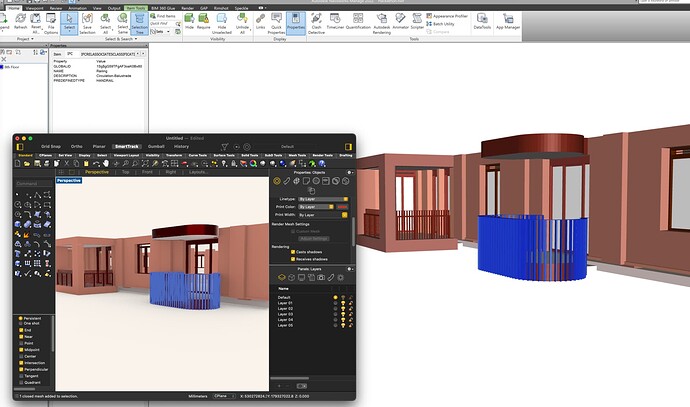

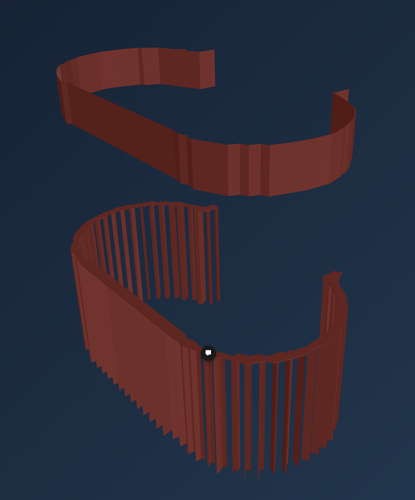

Jitter aside, I may be seeing impacts on mesh accuracy, possibly from within my conversion.

Mesh fidelity may be a distance-from-zero thing, or simply an oddness in a matrix transformation I’m applying pre-commit.

Reading into GH there is still slight oddness (might be a float vs double issue).

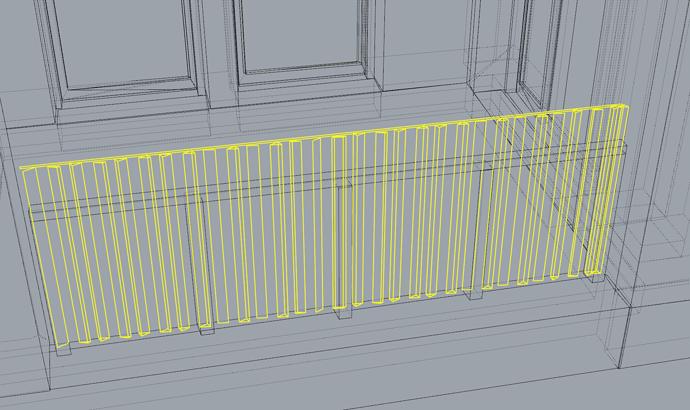

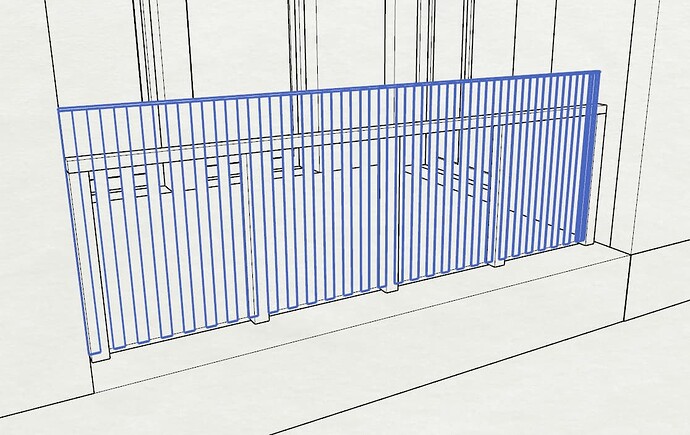

The original flatbar in Navisworks

Hi @jonathon

Indeed, the same fp32 precision issues (distance from 0 thing) that cause jittering will also cause poor mesh fidelity. In extreme cases, very bad ones.
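To make the distance-from-zero effect concrete, here is a small Python sketch that rounds a coordinate to 32-bit float and measures the error. The helper name `to_f32` and the sample coordinates are made up for illustration; the only thing assumed about the pipeline is that positions end up as fp32 somewhere along the way.

```python
import struct


def to_f32(value: float) -> float:
    """Round a Python float (a double) to the nearest 32-bit float value."""
    return struct.unpack("f", struct.pack("f", value))[0]


near = 1.123          # a coordinate close to the origin
far = 6_000_000.123   # the same fractional offset, six million units out

near_error = abs(to_f32(near) - near)
far_error = abs(to_f32(far) - far)

# Near the origin the rounding error is tiny; far away the whole
# fractional part is lost because adjacent fp32 values are 0.5 apart.
print(near_error, far_error)
```

With only 24 bits of significand, fp32 values between about 4.2 and 8.4 million are spaced 0.5 apart, so any detail finer than that collapses onto the same value.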

Thanks, @alex, @dimitrie & @izzylys

I’m parking this for now. However, I have audited the imported meshes and the vertical fidelity is intact, so I will log an issue to myself to resolve this somehow when I have more bandwidth. Or there are leet codez to steal from the speckle team

yeah, it looks like a floating-point error (the fact that the Z coordinates are correct also points in that direction). Maybe speckle for Rhino/GH is not using double precision meshes?

BTW, working far from the origin has always been a source of problems in Rhino; some of its commands will create artifacts of every kind

Update

I have fixed my geometry conversion routines. The problems were, as suggested, all in the fidelity of the conversion from compressed coordinate space and the per-mesh matrix calculation that brings geometry into real coordinate space.

Just waiting on the anti-jitter upgrade

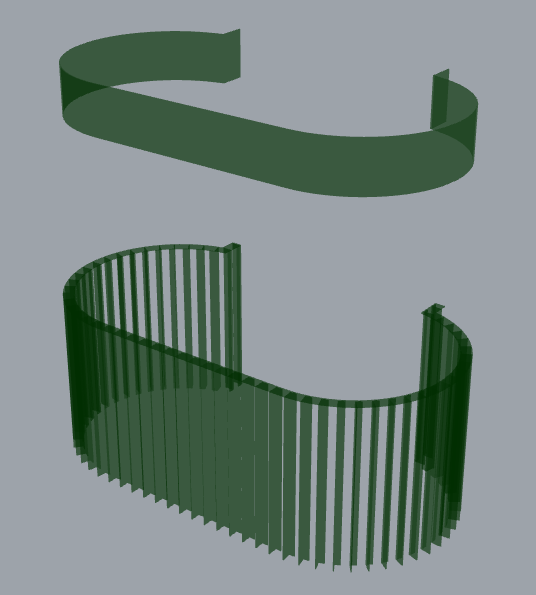

Great stuff! It does look much better than before. Also happy to report the anti jitter meds that @alex cooked up are gone!

We’ve just pushed an update to latest, and i console-log hacked the viewer to load that commit there:

Correct meshes & buttery smooth spinning

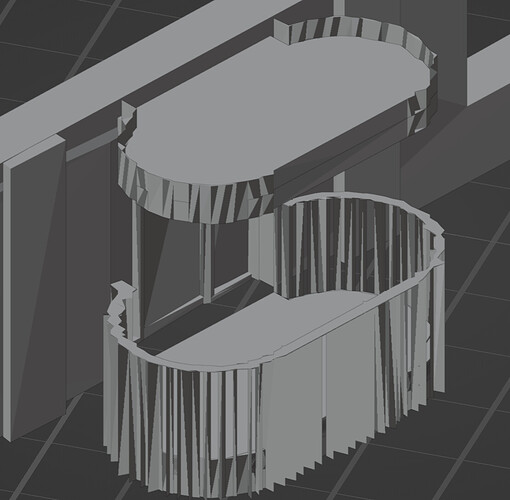

No jitter on latest, but the fidelity of geometry in the viewer isn’t quite right.


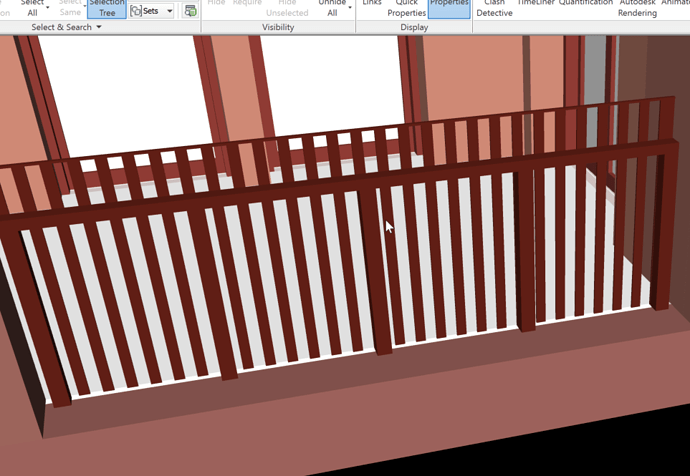

The good news is that the Connectors read it correctly:

Hi @jonathon

There is something wrong indeed there. I’ll be looking into this and I’ll keep you posted!

FWIW. Blender seems equivalently borked.

For now (because I don’t have any other needs) I will store a transform matrix from bbox centre to rw coords as a property and commit with bbox centred at 0,0,0.
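A rough Python sketch of that fallback, for anyone wanting to do the same. The function names and the row-major 4x4 layout with the translation in the last column are my assumptions for illustration; how the matrix is actually attached to the commit is left out.

```python
def centre_on_origin(vertices):
    """Shift vertices so their bounding-box centre sits at 0,0,0.

    Returns the shifted vertices and a 4x4 matrix (row-major, translation
    in the last column) that maps them back to real-world coordinates.
    """
    xs, ys, zs = zip(*vertices)
    cx = (min(xs) + max(xs)) / 2
    cy = (min(ys) + max(ys)) / 2
    cz = (min(zs) + max(zs)) / 2
    centred = [(x - cx, y - cy, z - cz) for x, y, z in vertices]
    to_world = [
        [1.0, 0.0, 0.0, cx],
        [0.0, 1.0, 0.0, cy],
        [0.0, 0.0, 1.0, cz],
        [0.0, 0.0, 0.0, 1.0],
    ]
    return centred, to_world


def apply_transform(matrix, point):
    """Apply the affine part of a 4x4 row-major matrix to an (x, y, z) point."""
    x, y, z = point
    return tuple(r[0] * x + r[1] * y + r[2] * z + r[3] for r in matrix[:3])


verts = [(1_000_000.0, 2_000_000.0, 10.0), (1_000_002.0, 2_000_004.0, 12.0)]
centred, to_world = centre_on_origin(verts)
restored = [apply_transform(to_world, p) for p in centred]
print(centred, restored)
```

The centred coordinates are small enough to survive a cast to float, and the stored matrix keeps the real-world position recoverable on the receiving side.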

have not investigated this mesh in particular, but blender does not support double precision coordinates so if precision is the issue here then it’ll definitely also be the issue in blender!

Yup, I assumed as much. Hence the fallback option to abandon (but record) the transform.

A million years ago when I was first dealing with the differences between AutoCAD and Microstation for rail projects, the Bentley approach was a dynamic memory solution to maintain the accuracy for the real-world (sort of like a floating bbox window to the data - more complicated than that as it maintained accuracy over kilometres) whereas back then AutoCAD was similarly borked.

plus ça change, plus c’est la même chose

Hi @jonathon

I’ve looked into this a bit more. There may be a solution which I’m very curious to try out. I’ll let you know about the results soon(-ish)

I have added a relocate bounding-box-centre > world-zero routine behind an option flag.

Everything that you’ve been doing has it all tip-top now.

Once the ideas for Setting Out Points or Transforms or whatever settle, I can amend it to fit whatever schema it ends up with.

Hi @jonathon

Awesome! The viewer will also be translating the entire content closer to the world origin soon as part of one of the next updates.

However I’ll also be trying the idea for a solution I mentioned in my previous response just out of curiosity. I just need to make a bit of time for it

I’ll let you know what comes out of it!

Hi @jonathon

So I’ve looked into the matter, found the problem(s), and fixed them

There are two issues in the current implementation:

Our current RTE implementation is very much large-range biased when it comes to emulating double precision vertex positions. We encode double precision values as two separate floats. There are multiple ways to encode them, depending on whether you want a higher range or a higher precision. It’s impossible to have both the highest range and the highest precision at the same time. You can however give up range in favor of precision, and vice versa. Our current RTE implementation heavily favors range. Much too heavily, actually… This makes precision suffer greatly, and that’s why you got that poor quality mesh as a result

The second issue is less complicated, and it has to do with how three.js stores the vertex position values in its buffer attributes. It casts them down to float before we get a chance to do the high/low split, meaning we’re currently splitting float values, which again hurts precision. On top of this, three’s implementation for computing the vertex normals uses the position buffer attribute content as the source for generating them. Since the buffer attribute is already cast down to float, the normals also get messed up by default, but that’s an easy fix, since we can compute the normals ourselves using the double vertex positions
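As a rough illustration of the normals part, here is a Python sketch (not the viewer code; `to_f32`, `face_normal`, and the sample triangle are made up) that computes the same face normal from double positions and from positions already cast down to float:

```python
import math
import struct


def to_f32(value: float) -> float:
    """Round a double to the nearest 32-bit float value."""
    return struct.unpack("f", struct.pack("f", value))[0]


def face_normal(a, b, c):
    """Unit normal of triangle abc, or (0, 0, 0) if the triangle is degenerate."""
    ab = [b[i] - a[i] for i in range(3)]
    ac = [c[i] - a[i] for i in range(3)]
    n = (
        ab[1] * ac[2] - ab[2] * ac[1],
        ab[2] * ac[0] - ab[0] * ac[2],
        ab[0] * ac[1] - ab[1] * ac[0],
    )
    length = math.sqrt(sum(k * k for k in n))
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(k / length for k in n)


# A small triangle in the XY plane, six million units from the origin in X.
triangle = [(6_000_000.0, 0.0, 0.0), (6_000_000.2, 0.0, 0.0), (6_000_000.0, 0.2, 0.0)]
cast = [tuple(to_f32(k) for k in v) for v in triangle]

normal_double = face_normal(*triangle)  # points along +Z as expected
normal_cast = face_normal(*cast)        # two vertices merged, so no usable normal
print(normal_double, normal_cast)
```

In this example the 0.2 offset in X is smaller than the fp32 spacing at that distance, so two vertices land on the same position and the triangle degenerates, which is one way shading can break even when the silhouette looks roughly right.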

Regarding the first issue, I’m thinking of having a dynamic range/precision encoding depending on the world size. We will add this feature in one of the next releases. Regarding the second issue, we’ll simply compute the normals ourselves and make sure we split the double value, not the cast-down one.
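For readers following along, here is a minimal Python sketch of why the order of cast and split matters. It uses the simplest high/low split (high is the value rounded to float, low is the rounded remainder), which is only one of the possible encodings and not necessarily the one the viewer uses; `to_f32` and `split_double` are hypothetical names.

```python
import struct


def to_f32(value: float) -> float:
    """Round a double to the nearest 32-bit float value."""
    return struct.unpack("f", struct.pack("f", value))[0]


def split_double(value: float):
    """Encode a double as two floats: a coarse high part and a fine low part."""
    high = to_f32(value)
    low = to_f32(value - high)  # remainder that the high part could not hold
    return high, low


x = 6_000_000.123456

# Splitting the original double keeps the fractional detail in the low part.
high, low = split_double(x)
error_from_double = abs((high + low) - x)

# Splitting a value that was already cast to float: the detail is gone
# before the split happens, so the low part is just zero.
high_c, low_c = split_double(to_f32(x))
error_from_cast = abs((high_c + low_c) - x)

print(error_from_double, error_from_cast)
```

The sum is done in double here only to measure the error. In an RTE-style setup the two parts would instead be recombined relative to the camera position, so that the large magnitudes cancel before the small parts are added.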

Sounds like you have another speckle performance blog post in your future.