I’m running into an issue while trying to deploy a new instance of Speckle Server (2.16.0 with frontend 1) into an EKS cluster on AWS. I’ve deployed speckle-server successfully on AWS a few times, but wanted to start with a fresh cluster with this release.

The /graphql endpoint doesn’t seem to be responding. Any requests to /graphql are timing out, including the liveness/readiness probes on the pod. This means that the speckle-server pods, while running, aren’t Ready. I’ve tried to launch older releases on a fresh cluster and I’ve been running into the same problem. This makes me think it’s my cluster configuration, but I’m not sure how it could be causing this error since the probe requests are made from the speckle-server pod to itself and wouldn’t be impacted by any security group settings or ingress/dns/certificate issues.
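To tell a hang apart from an outright connection failure, the probe-style request can be reproduced by hand. The following is a minimal sketch, not the chart's actual probe: it assumes Python is available wherever it runs (the server image may not ship it), and the `probe` helper and its return strings are made up for illustration. The URL mirrors the `127.0.0.1:3000` host seen in the server log.

```python
import socket
import urllib.error
import urllib.request

# Bypass any proxy environment variables so the request really goes to localhost.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def probe(url: str, timeout: float = 5.0) -> str:
    """Send one GET and classify the outcome (hypothetical helper)."""
    req = urllib.request.Request(url, headers={"Content-Type": "application/json"})
    try:
        with _opener.open(req, timeout=timeout) as resp:
            return f"responded: HTTP {resp.status}"
    except urllib.error.HTTPError as exc:
        # The server answered, just not with a 2xx status.
        return f"responded: HTTP {exc.code}"
    except urllib.error.URLError as exc:
        # Failures while connecting or sending are wrapped in URLError.
        if isinstance(exc.reason, (socket.timeout, TimeoutError)):
            return "timed out"
        return f"failed: {exc.reason}"
    except (socket.timeout, TimeoutError):
        # The connection was accepted but no response arrived in time.
        return "timed out"
```

Calling `probe("http://127.0.0.1:3000/graphql", timeout=10)` from inside the pod would return `"timed out"` if the server accepts the connection but never replies, which is the symptom described above, and a `"failed: ..."` string if nothing is listening at all.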

Additional context:

I’ve deployed a nearly identical configuration locally and it works without any issues. The only real differences are that the local deployment doesn’t require TLS or PGSSL, and it uses letsencrypt-staging.

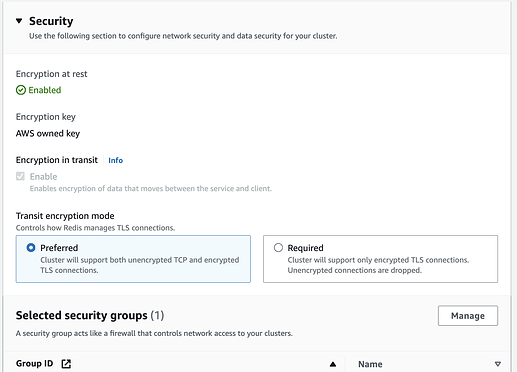

My postgres and redis resources are in the same VPC and share the VPC’s default security group. I’ve also tried adding them to the node group’s security groups in case the connection between PG and the server was timing out.
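One way to rule the network path in or out is a plain TCP connect with a short timeout, run from a pod on the cluster nodes. This is a rough sketch under assumptions: `check_tcp` is a hypothetical helper, and the hostnames in the usage note are placeholders, not real endpoints. A timeout usually points at traffic being silently dropped (security group, NACL, or routing), while a refusal means the host is reachable but nothing is listening on that port.

```python
import socket


def check_tcp(host: str, port: int, timeout: float = 3.0) -> str:
    """Attempt one TCP connection and classify the result (hypothetical helper)."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except socket.timeout:
        # No answer at all within the timeout: packets are likely being dropped.
        return "timeout"
    except ConnectionRefusedError:
        # The host replied, but no service accepts connections on this port.
        return "refused"
    except OSError as exc:
        # DNS failures, unreachable networks, and similar errors.
        return f"error: {exc}"
```

For example, `check_tcp("<postgres_host>", 5432)` and `check_tcp("<redis_host>", 6379)` use the default Postgres and Redis ports; substitute whatever hosts and ports the deployment actually uses.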

I’ve tried toggling the networkPolicy and serviceAccount properties for all of the containers just in case that’s related.

The landing page loads to the “Interoperability in seconds” screen without a login and throws the errors you’d expect:

- WebSocket connection to 'wss://speckle.<my_domain>.app/graphql' failed
- POST https://speck.<my_domain>.app/graphql 503 (Service Unavailable)

All other pods are Running except speckle-webhook-service and speckle-preview-service, which are stuck in Pending.

Here’s the specific error in the speckle-server pod’s log:

{"level":"info","time":"2023-10-19T19:05:55.474Z","req":{"id":"36601963-d742-4c3f-b1a1-ba8757fcdb44","method":"GET","path":"/graphql","headers":{"content-type":"application/json","host":"127.0.0.1:3000","connection":"close","x-request-id":"36601963-d742-4c3f-b1a1-ba8757fcdb44"}},"res":{"statusCode":null,"headers":{"x-request-id":"36601963-d742-4c3f-b1a1-ba8757fcdb44","access-control-allow-origin":"*"}},"responseTime":9704,"msg":"request aborted"}
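The fields that matter in that line can be pulled out with a few lines of Python. This is only a sketch: `summarize_request_log` is a made-up helper, and the reading of the fields is an assumption about the logger's semantics, namely that a null `statusCode` together with `"request aborted"` means the caller gave up before the server wrote any response.

```python
import json

# The log line exactly as it appears in the speckle-server pod log.
LOG_LINE = r'{"level":"info","time":"2023-10-19T19:05:55.474Z","req":{"id":"36601963-d742-4c3f-b1a1-ba8757fcdb44","method":"GET","path":"/graphql","headers":{"content-type":"application/json","host":"127.0.0.1:3000","connection":"close","x-request-id":"36601963-d742-4c3f-b1a1-ba8757fcdb44"}},"res":{"statusCode":null,"headers":{"x-request-id":"36601963-d742-4c3f-b1a1-ba8757fcdb44","access-control-allow-origin":"*"}},"responseTime":9704,"msg":"request aborted"}'


def summarize_request_log(line: str) -> dict:
    """Extract the request target, status, and duration from one JSON log line."""
    entry = json.loads(line)
    req = entry.get("req", {})
    res = entry.get("res", {})
    return {
        "path": req.get("path"),
        "host": req.get("headers", {}).get("host"),
        "status": res.get("statusCode"),  # JSON null becomes None
        "response_time_ms": entry.get("responseTime"),
        "msg": entry.get("msg"),
    }


summary = summarize_request_log(LOG_LINE)
print(summary)
```

Here the request targets `127.0.0.1:3000`, the status is empty, and the request was open for 9704 ms before it was aborted, so the server received the request but never produced a reply.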

Here’s the rest of the startup log in speckle-server, which looks completely normal to me:

{"level":"info","time":"2023-10-19T19:05:39.828Z","phase":"db-startup","msg":"Loaded knex conf for production"}

{"level":"info","time":"2023-10-19T19:05:42.739Z","component":"modules","msg":"💥 Init core module"}

{"level":"info","time":"2023-10-19T19:05:42.909Z","component":"modules","msg":"🔔 Initializing postgres notification listening..."}

{"level":"info","time":"2023-10-19T19:05:42.910Z","component":"modules","msg":"🔑 Init auth module"}

{"level":"info","time":"2023-10-19T19:05:42.972Z","component":"modules","msg":"💅 Init graphql api explorer module"}

{"level":"info","time":"2023-10-19T19:05:42.972Z","component":"modules","msg":"📧 Init emails module"}

{"level":"info","time":"2023-10-19T19:05:43.742Z","component":"modules","msg":"♻️ Init pwd reset module"}

{"level":"info","time":"2023-10-19T19:05:43.742Z","component":"modules","msg":"💌 Init invites module"}

{"level":"info","time":"2023-10-19T19:05:43.746Z","component":"modules","msg":"📸 Init object preview module"}

{"level":"info","time":"2023-10-19T19:05:43.747Z","component":"modules","msg":"📄 Init FileUploads module"}

{"level":"info","time":"2023-10-19T19:05:43.747Z","component":"modules","msg":"🗣 Init comments module"}

{"level":"info","time":"2023-10-19T19:05:43.747Z","component":"modules","msg":"📦 Init BlobStorage module"}

{"level":"info","time":"2023-10-19T19:05:43.818Z","component":"modules","msg":"📞 Init notifications module"}

{"level":"info","time":"2023-10-19T19:05:43.818Z","component":"modules","msg":"📞 Initializing notification queue consumption..."}

{"level":"info","time":"2023-10-19T19:05:43.833Z","component":"modules","msg":"🤺 Init activity module"}

{"level":"info","time":"2023-10-19T19:05:43.837Z","component":"modules","msg":"🔐 Init access request module"}

{"level":"info","time":"2023-10-19T19:05:43.837Z","component":"modules","msg":"🎣 Init webhooks module"}

{"level":"info","time":"2023-10-19T19:05:43.837Z","component":"modules","msg":"🔄️ Init cross-server-sync module"}

{"level":"info","time":"2023-10-19T19:05:43.837Z","component":"modules","msg":"🤖 Init automations module"}

{"level":"info","time":"2023-10-19T19:05:43.971Z","component":"cross-server-sync","msg":"⬇️ Ensuring base onboarding stream asynchronously..."}

{"level":"info","time":"2023-10-19T19:05:43.971Z","component":"cross-server-sync","msg":"Ensuring onboarding project is present..."}

{"level":"info","time":"2023-10-19T19:05:45.350Z","phase":"startup","msg":"🚀 My name is Speckle Server, and I'm running at 0.0.0.0:3000"}
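Lining up the timestamps gives a rough timeline. The arithmetic below is a sketch that assumes the "request aborted" entry is written at the moment the request ends, so subtracting `responseTime` gives the approximate time the request arrived; the variable names are illustrative only.

```python
from datetime import datetime, timedelta

FMT = "%Y-%m-%dT%H:%M:%S.%fZ"


def parse_ts(ts: str) -> datetime:
    """Parse the ISO-style timestamps used in the server log."""
    return datetime.strptime(ts, FMT)


first_log = parse_ts("2023-10-19T19:05:39.828Z")   # "Loaded knex conf for production"
listening = parse_ts("2023-10-19T19:05:45.350Z")   # "running at 0.0.0.0:3000"
aborted = parse_ts("2023-10-19T19:05:55.474Z")     # "request aborted"
response_time = timedelta(milliseconds=9704)       # responseTime from the aborted request

startup_duration = listening - first_log
request_started = aborted - response_time          # assumption: logged when the request ended
gap_after_listen = request_started - listening

print("startup took:", startup_duration.total_seconds(), "s")
print("request started at:", request_started.strftime("%H:%M:%S.%f")[:-3])
print("gap after listen:", gap_after_listen.total_seconds(), "s")
```

Under that assumption, startup took about 5.5 s and the aborted request arrived roughly 0.4 s after the server reported it was listening, then stayed open for the full 9.7 s without a response.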